Posts

-

MIT Mystery Hunt 2018

I plan to edit in puzzle links later.

After going in person for the past 2 Hunts, I decided not to fly in for the 2018 Hunt. The short explanation is that I always procrastinate on flights, and at the time I needed to make a decision, work was very, very busy. There were several Code Red, “implement this or nothing is possible” issues.

The issue wasn’t that I didn’t have time. It was that I’d have to take days off on short notice, which would have thrown a bunch of time estimates off. If I had planned Hunt ahead of time, and made sure everyone knew I would be out for certain days, I would have flown in. Lesson learned: hobbies don’t just fall into your life, you make time for your hobbies.

I hunted with ✈✈✈ Galactic Trendsetters ✈✈✈ again. I suppose at this point, it’s my home team, thanks to connections from the high school math contest network.

If you want to avoid spoilers, you should stop reading now.

* * *

Let’s start with broad, overarching feelings about Hunt.

I think Hunt was pretty good! I was worried that a team would finish somewhat quickly, given the warning that Hunt would be shorter than previous years, and that it would be best if your team wasn’t too large. My expectation was that it would be fewer puzzles, team sizes wouldn’t change, the winning team would solve Hunt pretty quickly, and then the rest of us would get there along the way. The initial Emotions round did nothing to disprove this. It turned out that the Island puzzles were a lot harder, and big teams had decided to split ahead of Hunt. This was still MIT Mystery Hunt, and it was still going to be A Journey.

Number of puzzles isn’t the only metric that matters in Hunt. Unlock structure matters too. If it’s harder to unlock a bunch of puzzles, it’s harder to parallelize solving, which slows down Hunt and leads to bottlenecking behavior. The easy puzzles get solved instantly, leaving just the hard ones. It’s especially bad for large teams, because the odds you get to see a puzzle before it gets solved become vanishingly small.

On the flip side, if it’s too easy to unlock a ton of puzzles, then small teams can get overwhelmed, and big teams just steamroll everything. It’s all a bunch of trade-offs.

Galactic reported 60 people for the scavenger hunt this year, and it definitely felt like we were gated on unlocks. This was especially strong in the Games round, which was our first island. The unlock structure was linear, until you unlocked a puzzle close to the center. It quickly became clear that if we got stuck on any single puzzle, it would potentially block unlocks for a ton of other puzzles. And in fact, that’s what happened for us: both The Year’s Hardest Crossword and Sports Radio were stuck for a while, so we had to rely entirely on solving the linear puzzles up to All the Right Angles before it felt like we were out of danger. In general, I would have liked to see more redundancy in the unlocks for that round.
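To make the bottleneck concrete, here's a toy sketch. It assumes a simplified model where solving a puzzle opens whatever it points to, which is not the actual Hunt unlock logic, and the puzzle names `A` through `E` and the `reachable` helper are made up for illustration. It compares a linear chain against a layout with one redundant path when a single puzzle is stuck.

```python
from collections import deque

def reachable(unlocks, start, stuck):
    """Return the set of puzzles a team can open.

    unlocks: dict mapping a puzzle to the puzzles it opens once solved.
    stuck:   puzzles that get opened but never solved (hypothetical).
    """
    opened = {start}
    queue = deque([start])
    while queue:
        puzzle = queue.popleft()
        if puzzle in stuck:
            continue  # opened but unsolved, so it unlocks nothing
        for nxt in unlocks.get(puzzle, []):
            if nxt not in opened:
                opened.add(nxt)
                queue.append(nxt)
    return opened

# Toy layouts with made-up puzzle names.
linear = {"A": ["B"], "B": ["C"], "C": ["D"], "D": ["E"]}
redundant = {"A": ["B", "C"], "B": ["D"], "C": ["D"], "D": ["E"]}

print(sorted(reachable(linear, "A", stuck={"B"})))
print(sorted(reachable(redundant, "A", stuck={"B"})))
```

In the linear layout, getting stuck on `B` hides everything after it. In the redundant layout, the path through `C` keeps the rest of the round open.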

I have basically zero complaints about the rest of the Hunt. I really like the art aesthetic of the Pokemon round, especially the way it contrasted with the look of the rest of the Hunt. And all the puzzles felt very fair to me.

On to specific stories!

* * *

In the days leading up to Hunt, I made a bingo generator. It seemed like a cute idea, and the site was entirely static, which made it easy to piggyback off GitHub Pages. Unfortunately, I didn’t get a Bingo. I needed “Puzzle is unsolved by the end of Hunt” to get there, and we self-torpedoed my chances by using our spare Buzzy Bucks to buy solutions to all remaining puzzles.

Most recent Hunts have a free answer system that lets you sweep up unsolved puzzles. I’m not sure a zero-solve puzzle is even possible in a modern Hunt.

This was my 6th Mystery Hunt, and I continue to feel like I’m bad at puzzles. I historically have a bad track record at getting a-has. Here are my main contributions every Hunt.

- Telling people we should read the flavor text.

- Telling people we should read the puzzle title.

- Trying to brute-force puzzles by matching partials to whatever word lists I have, and failing.

- Counting things.

- Looking at metapuzzles early, on the off chance they’re easy to solve with partial info.

- Getting really anal about organizing existing data and explaining current puzzle theories.

The way this works out is that I basically never get to do “hero-solves”, because other people hero-solve faster than I do. I feel more like the grease that gets all the other cogs to keep spinning. But, who knows, maybe I underestimate my solving skills.

I spent my Friday morning to afternoon trying to do work while spectating our progress. This was a terrible idea, because I didn’t do much work, and also didn’t get to work on puzzles. I ended up skipping all of the Emotions round. The extent of my contribution was opening the metas, looking at partial progress, and thinking, “oh yeah that’s definitely right.” For the Fear meta in particular, several people on the team had gotten suspicious when the Health & Safety Quiz was mailed to everybody, and got very suspicious when HQ sent a deliberately vague reply as to whether it was a puzzle or not. So when we saw Fear’s flavor text, we got the idea pretty quickly.

Our unlock order was Games, Sci-Fi, Pokemon, Hacking. The first two were by popular vote. By the time we unlocked the third island, it was 1:30 AM on Saturday. Local people didn’t want to do on-campus puzzles at night, and remote people didn’t want to unlock on-campus puzzles because they wanted to have puzzles to work on. This ended up backfiring a bit, because on Saturday night, the only puzzles open were longstanding Games puzzles and physical puzzles, which left little for people who weren’t interested in either. At least it gave me an excuse to go to bed.

The first puzzle I really looked at was Shift, which was cute. I thought “blue shift, red shift”, but didn’t think much of it. Someone else figured out that “gamma” should be shifted to “delta”, I realized “blue shift, red shift” was actually right, and we cleaned it up from there.

I spent a bunch of time on All The Right Angles. One nice thing about crossword-like puzzles is that you can contribute even if you only figure out a few clues. We got the billiards and substitution gimmick pretty quickly, but had trouble getting SIXTUS to fit in our grid. Eventually, someone figured out that “LOUIS IX isn’t LOUIS 9, it’s LOUI6, the cheeky bastards”. After filling in the grid, we drew the billiard path, and someone joked we should call in EIGHT because the path was a figure eight. That gave people some bad flashbacks to SEVEN WONDERS from last year. Luckily, the answer was not EIGHT.

Walk Across Some Dungeons 2 was fun. I was on it instantly, but I moved to other puzzles when I realized I was bad at Sokoban.

When I saw A Tribute: 2010-2017 get unlocked, I immediately thought, “oh sick, I bet this is the puzzle referencing old Hunts”, and then I looked, and it was a puzzle referencing old Hunts. Validation! I didn’t manage to ID any of the old puzzles, and I didn’t solve any of the segments either, but I tried!

I contributed basically zero to the Sci-Fi round. The only thing I did was throw backsolve attempts at the metas now and then.

In the Pokemon round, the first puzzle I worked on was The Advertiser. We figured out they were all TMs, identified them, and then were told it was a metapuzzle. We solved it with 2 answers.

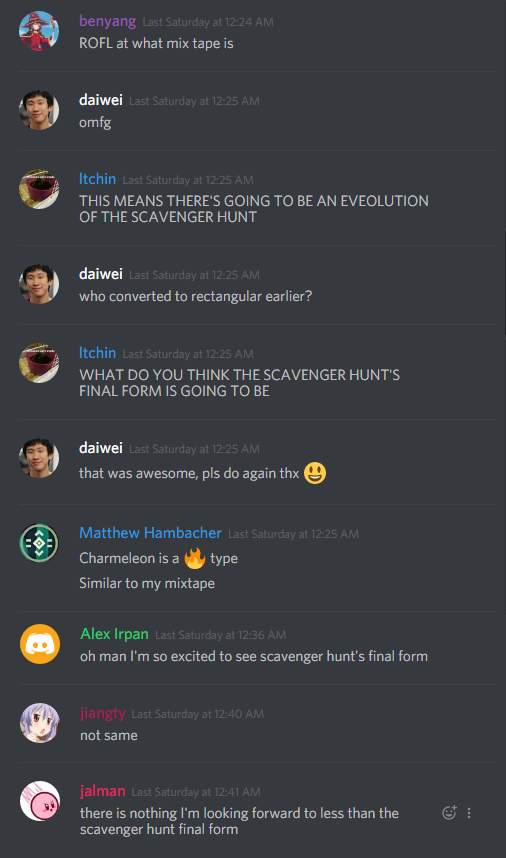

The first regular puzzle we solved in Pokemon was 33 RPM, so Mix Tape was the puzzle where we discovered the evolution gimmick, which was pretty great.

I puzzled with another remote solver, and he was just getting so mad at Mix Tape. It was past midnight, and we had the audio, but the quality was bad. The songs weren’t Shazamable, which was just all kinds of pain. Our image pipeline was “take the spiral image” \(\rightarrow\) “unroll into a rectangular image” \(\rightarrow\) “turn into audio”, but small errors accumulated. In retrospect, reading directly from the raw spiral would have been better.

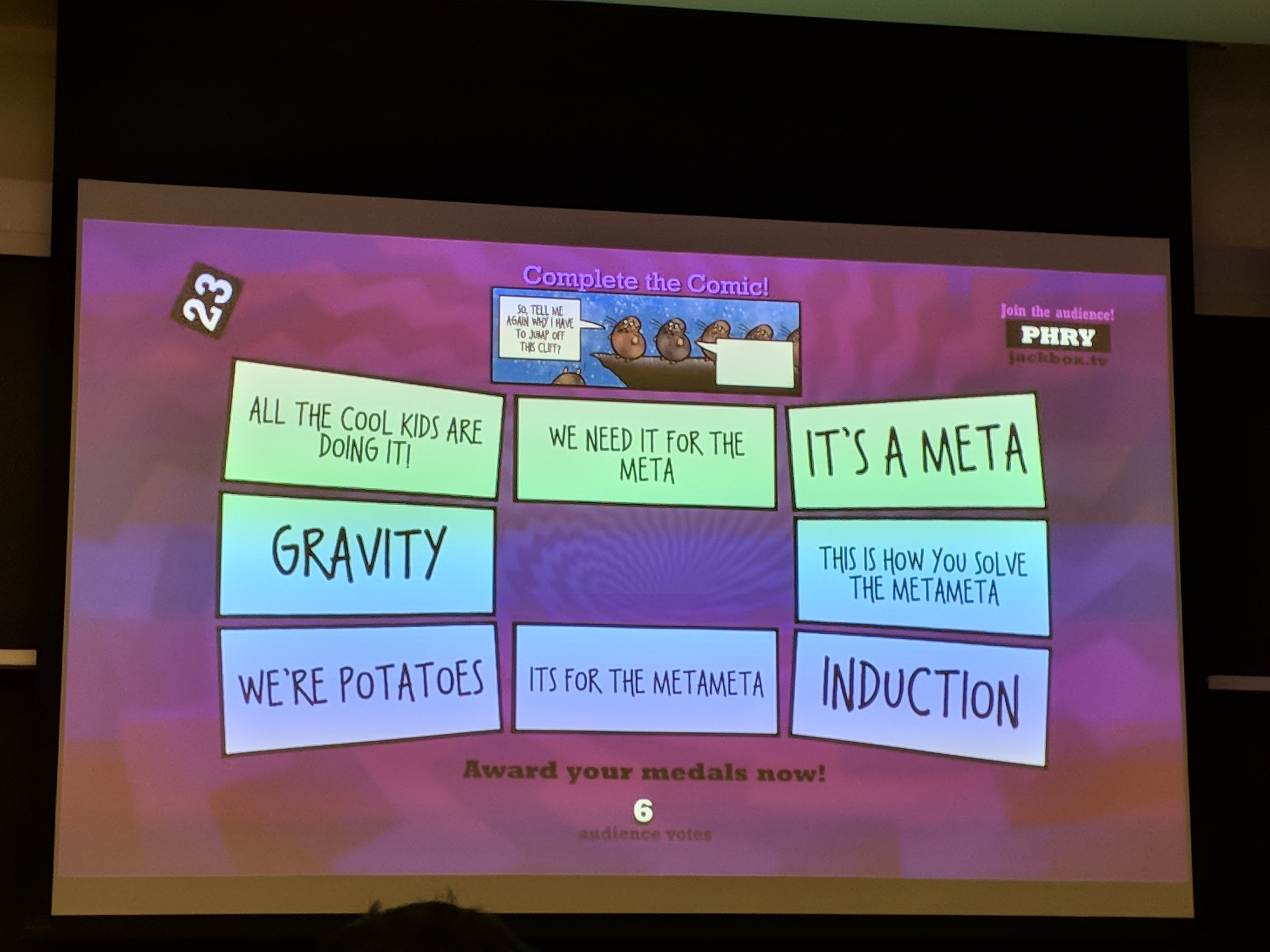

When Twitch Plays Mystery Hunt came out, I got the impression that literally every local solver started working on it at once. As a remote solver, I unfortunately didn’t get to participate, because all the coordination was getting done in person. I did get to see plenty of memes and copypasta.

The first “intentionally left blank” puzzle we unlocked was Lycanroc, which confused us for a while. Lycanroc is literally a werewolf, so we thought we had to wait until night for the puzzle to change form. It was a sketchy theory, but we didn’t have anything else. I believe the metameta structure got figured out when somebody noticed the Fire transformation. Once we had that, we got the rest in a matter of minutes. This led to an amusing statistic: our best “solve” time was 6 seconds.

Some people on our team got very, very excited about X Marks the Spot. I didn’t work on it, but as a Euclidean geometry fan, I approve.

The Scouts was the last meta we solved in the Pokemon round. We only solved it after the metameta, when people working on Hacking were looking for things to do. I made a comment about “badges” early on, but it took us a while to discover the puzzle titles were relevant.

When I woke up on Saturday morning, I saw in the chat that Pestered was a Homestuck puzzle. I thought I missed it, but turns out it was stuck on extraction. I didn’t figure out the extraction either, and it was the final puzzle we bought. We got tripped up on two things. One, we were certain that we needed to use the DNA pairs of the trolls again, using the zodiac signs to get an ordering. Two, the last page of The Tech from the Thursday before Hunt had horoscopes that looked a lot like puzzle data. Turns out it was a massive, unintentional red herring. I wonder if HQ was confused by our GRYFFINDOR guess.

The first puzzle I worked on in Hacking was Bark Ode. The most amusing part was easily the time where I had to mark a square as ? because I couldn’t figure out if it was a dog or not. I then looked at Voter Fraud, but didn’t do much for it, besides noting it was probably a voting systems puzzle.

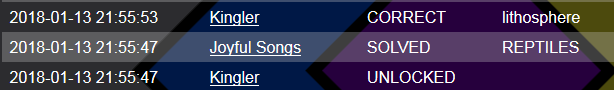

I planned to work on Murder at the Asylum, but some local people were solving it at a chalkboard, which didn’t work for remote solvers. So, instead I looked at the Scout meta. Someone else made the key observation that TENDINOPATHIES was likely after a chute and overlapped with EXTEND. There was only one place on the grid that supported that, and with another location guess, we got EXOS as a partial, which was enough to solve the meta. I then worked out constraints on the 3 missing answers, got my work checked, and backsolved Murder at the Asylum before they could forward solve it. Revenge of the remotes!

That ended up being my final contribution to the Hunt. By the time I woke up on Sunday morning, we only had metas, physicals, and abandoned puzzles left. I did some work on drawing out the connectivity graphs for Flee, but that was about it. We did run into one red herring: BIOFUEL TORCH was a tool, BACKUP PLAN sounded like a punt, and EXOSKELETON was a plausible hack, which drove us to think about “Hack, Punt, Tool” for a bit. Somehow, despite never being an MIT student, I still got this reference. The fangs of MIT culture run deep…

I’ll end with a question: what do people think about after finishing a Mystery Hunt?

See you next Hunt! Congrats (and condolences) to Setec.

-

So There I Was, Buying My Little Pony Merchandise in Italy

For Thanksgiving, my family went on a trip to Rome. It was pretty cool! There’s something special about visiting a city that is literally thousands of years old. It is one thing to read about the Renaissance, and it is another thing to realize that the sculpture in the town plaza predates the Declaration of Independence by over 300 years, and it’s right there.

The one downside is that although my parents liked the scenery, they weren’t the biggest fans of Italian food. They didn’t dislike it, but, well, let me put it this way: the only restaurant we visited multiple times was a Chinese restaurant.

To be fair, the Chinese restaurant catered to Chinese exchange students, meaning their Chinese food was authentic and actually pretty good. Still, I wasn’t expecting to eat Chinese food in Rome, and I especially wasn’t expecting to eat Chinese food multiple times in Rome.

But I digress! During the trip, we visited a supermarket to buy some fruit. While there, a family member pointed out these boxes.

I was against buying it. They argued the following.

- According to the packaging, there was “a surprise inside”.

- It only cost 2 Euros.

- Buying MLP merchandise in Italy is intrinsically funny.

I bought two boxes.

* * *

Let’s take a closer look. The front and back have the same picture, but the two sides are different.

The first thing I noticed was that the character art looked…off, somehow. In what world does Fluttershy wink like that? In what world does Twilight smirk like that? Expression-wise, I’m pretty sure neither has made that face in the show, and it feels out-of-character for both of them. Fluttershy seems too reserved for a playful wink. Twilight seems too nice for a knowing smirk.

Rainbow also looked off, and after staring at it a while, I figured out why. Normally her mane hangs over her left eye, but here it hangs over her right.

Literally unbuyable. I’m only kind-of joking, actually. Look, here’s my view: fans have the right to call out shitty merchandise. Like, if you’re going to monetize your show, you should at least have your merchandise reflect what your show looks like! Don’t even tell me that the target audience of young girls won’t care. I suspect the target audience does care. One, I believe children are more perceptive than people think. Two, when you’re a kid, you don’t have as much going on in your life, which makes it easy to obsess over the details of your Saturday morning cartoons. The moment they changed Ash Ketchum’s voice actor in Pokemon, I was done with that show.

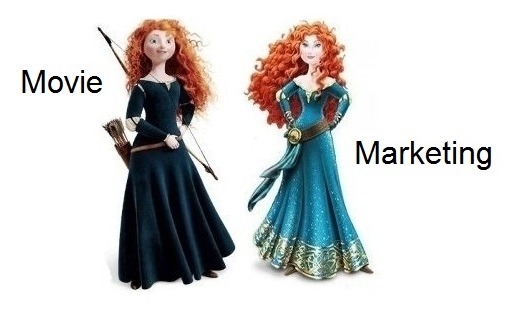

Take Brave as a case study. The goal of Brave was to create a Disney princess that avoided the gender stereotypes of the old Disney princesses. The movie succeeded. The marketing didn’t.

The marketing made Merida slimmer, made her a bit older, removed her bow, and made her hair less curly. Anecdotally, my Facebook feed had a few comments from parents, saying their daughter didn’t like it because “her hair wasn’t as curly”, and “it’s not the same Merida as the movie.” (You can read comments from Brave’s director here, if you’re interested in further ranting.)

The details matter. Don’t mess them up.

* * *

Opening the box produces this.

What looked like a chocolate muffin, and a few pony cards. The chocolate muffin…was not that good. Derpy would be ashamed.

Okay, so it’s not actually a muffin. It’s “panettoncino with chocolate chips”, but my headcanon is that if Derpy can make good muffins, she can make good panettoncino too.

Let’s take a closer look at these cards.

(Card fronts.)

(Card backs. It’s identical for all of them.)

Awwwwww, they’re so cute! I have no issues with the art here. But wait a second, there’s only 5 cards. Isn’t it supposed to be the Mane 6? Where’s Applejack? She’s not on the front. She’s not on the back.

Speaking of which, is Applejack even on the box?

Scroll up if you want. She isn’t. Applejack doesn’t appear anywhere on or in this box. Every other pony in the Mane 6 is there. The top bar has plenty of side characters.

…and no Applejack.

Strike the “Chinese food in Rome” thing. This right here is a What the hell were you thinking? moment. Applejack certainly isn’t that popular, but come on, she’s part of the main ensemble! There are plenty of Applejack fans!

This is funny at a meta-level, because people like to joke Applejack is “the best background pony”, but it’s sad for fans that have to see her getting treated as a second-class citizen. Either this is a mistake of colossal proportions, or Applejack is just that unappealing of a character. I’ll be generous and go with the former.

I’ve bought other MLP merchandise, but it’s primarily been fan-created merchandise. Fan merchandise tends to match the quality I expect, because it’s made by people who actually care about getting the details right.

Look, Hasbro, just do it better next time, alright?

-

The Research Tax

Written quickly. Close to stream-of-consciousness.

If you haven’t kept up with recent news in the intersection between academia and politics, here is the short version: the currently debated GOP tax bill significantly increases the tax burden on graduate students, and it just passed the House.

This has been covered by Scott Aaronson, Luca Trevisan, and others. The below is a summary of information from there. Skip to the next section if you’d just like my opinion.

Currently, universities handle PhD student tuition like this.

- The graduate student pays \(\$X\) as tuition.

- The university waives \(\$X\) of the tuition.

- The university then pays a graduate student stipend of \(\$S\).

- In the current system, stipend \(\$S\) is taxed and the waived tuition \(\$X\) is not. The student only ever receives \(\$S\) - the \(\$X\) is essentially invisible.

Under the new GOP tax bill, the waived tuition \(\$X\) will be taxed. This is a double whammy, since not only does it increase the total amount of taxable income, the increase is enough to push several students into the next federal tax bracket. A more detailed breakdown can be found here. The linked analysis shows that if nothing else changes, a typical in-state Berkeley PhD student would pay about $1400 more tax, and a typical MIT PhD student would pay about $9500 more tax. These are rough orders of magnitude for how it affects public universities vs private universities, with more damage to private universities because they have a higher tuition. Importantly, students would not get taxed because of a larger stipend - they would be taxed on money that has never entered their pockets in the first place.
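Here's a rough sketch of the mechanics. Everything numeric in it is hypothetical: the three-bracket schedule, the stipend, and the waived tuition are made-up round numbers picked to show the shape of the effect, not real federal brackets and not figures from the linked analysis. The `tax_owed` and `extra_tax_from_waiver` helpers are also just illustrative names.

```python
# Hypothetical marginal schedule: (bracket lower bound in dollars, rate in percent).
# These are NOT real tax brackets.
BRACKETS = [(0, 10), (20_000, 20), (50_000, 30)]

def tax_owed(taxable_income, brackets=BRACKETS):
    """Tax in dollars under a marginal-rate schedule."""
    owed_percent_dollars = 0
    for i, (lower, rate_pct) in enumerate(brackets):
        upper = brackets[i + 1][0] if i + 1 < len(brackets) else float("inf")
        if taxable_income > lower:
            # Only the slice of income inside this bracket is taxed at its rate.
            owed_percent_dollars += (min(taxable_income, upper) - lower) * rate_pct
    return owed_percent_dollars / 100

def extra_tax_from_waiver(stipend, waived_tuition):
    """Extra tax if the waived tuition X is counted as income on top of stipend S."""
    return tax_owed(stipend + waived_tuition) - tax_owed(stipend)

stipend = 30_000         # hypothetical $S
waived_tuition = 40_000  # hypothetical $X

print(tax_owed(stipend))                               # only S is taxable
print(tax_owed(stipend + waived_tuition))              # S + X is taxable
print(extra_tax_from_waiver(stipend, waived_tuition))  # the difference
```

With these made-up numbers, the student's take-home stipend is unchanged, but the taxable income crosses into the top hypothetical bracket, so the extra tax is larger than the waived tuition times the student's old marginal rate.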

As for why universities can’t simply declare that grad student tuitions are \(\$0\) - there’s some accounting trick that lets the university get more money if they give tuition waivers to grad students. I haven’t looked into the details of this.

* * *

I’m currently not in academia, for several reasons, but the big one is that I got a job offer from an industry research lab with interests close to mine. I’m certainly giving up some things, but the trade-offs fall in favor of me staying out of academia.

If I had gone to academia, I would have been okay financially, thanks to several lucky breaks. I was born in an upper-middle class family, the kind that doesn’t spend a lot of money but has money to burn. I had natural interest in math and computer science, and turns out the world’s willing to pay those people quite a bit if they enter finance or software. I liked algorithms, which happened to be the weird test of software engineering prowess in Silicon Valley - the only reason I got my first internship was because I knew the pseudocode for Dijkstra’s algorithm. And although part of my heart will always belong to the beauty of proofs, I tolerated systems enough to pick up the skills that let me handle industry.

Overall, I’ve lived a privileged life. That likely wouldn’t change in academia because CS PhDs have it easier than other departments. With careful spending, I think I could intern at a tech company some months of the year, use the money from that to fund research for the rest of the year, and still end net positive.

The thing is, these policies aren’t crippling to people like me. They’re crippling to the less fortunate.

I can’t speak for other fields, but academia for CS is increasingly a rich person’s game. Any strong PhD candidate could be at least an average software engineer, and that’s a lot of money to leave on the table. I’ve read an anecdotal story of a promising researcher, the first in her family to go to college, who laughed at the thought of going for a PhD. Her parents had done so much to support her. It would have been too selfish to turn down a well-paying job that could let her start paying them back.

Across all fields, the tax bill would essentially do the same: make academia more of a rich person’s game. The reason the news bothers me so much is that if it goes through, there’s going to be so much unfulfilled passion, so many students who can’t let their research interests override financial realities. It’s a duller, less colorful world.

* * *

To play devil’s advocate, the analysis above assumes nothing else about the world will change. This is very unrealistic. If the tax bill goes through as is, universities will certainly adjust - ask for more donations, decrease tuition, and make up the accounting shortfall elsewhere with even more creative cost-cutting. The actual tax increase would likely be lower than the current numbers.

However, I have a hard time believing that universities will be able to make up all the difference. Universities certainly have bloat, and a reduced budget provides a very strong motivation to identify that bloat - but based on what I’ve heard about university financials, I’m not convinced there’s a lot that can be cut without a fight. There are some damning numbers showing that administrators are taking up an increasingly large share of university budgets, but I’d guess that you can’t just lay off a ton of admins and expect the university to put itself back together in a reasonable timeframe.

The top-tier universities can weather this better. The lower-tier universities, less so. It’s the same rich person’s game - universities that already have trouble with recruiting grad students will have even more trouble recruiting grad students. The conclusion is similarly disappointing.

* * *

Throughout this post, I’ve been assuming academia is intrinsically valuable. That’s certainly up for debate. One argument I’ve seen is that outside of the top-tier universities, academia is a net-negative pursuit, and it would be better for society if lower-tier schools were priced out of relevance. Given the latent misery and stress of academia, and the constant self-doubt researchers have about the relevance of their own work, I think it’s worth considering this argument seriously. However, debating the merits of academia is out of the scope of this post.

To funnel everything back into RL terms (since I’m “that RL guy”) - I see academia as the ultimate extreme of the exploration-exploitation tradeoff. Industry is content to do what works, industry research labs can be more exploratory, and academia gets to consider crazy ideas that may not be relevant for decades. In my ideal society, there are always people taking crazy ideas seriously. And I mean that in a good way! Some nuts talking about water memory, some other people trying to quantify the odds we’re living in a simulation, a third group advocating that we spend the next 50 years building a model of all of ethics. The strength of academia (and the argument for tenure) is that it lets you do these things if you care about them enough.

Somewhere out in the world is a cohort of Medieval Studies PhDs, and I feel very safe saying that little of note will come from there in the next 25 years. But that doesn’t mean I want them to disappear. Do you know how insane you have to be to want to do Medieval Studies? Like, holy shit, you really really really really have to like the subject to want to spend your life doing that. How is that not crazy awesome?

The world should have room for people like that. I’m worried it won’t.